RepEval2019

The Third Workshop on Evaluating Vector Space Representations for NLP

June 6th 2019, Minneapolis (USA)

(co-located with NAACL)

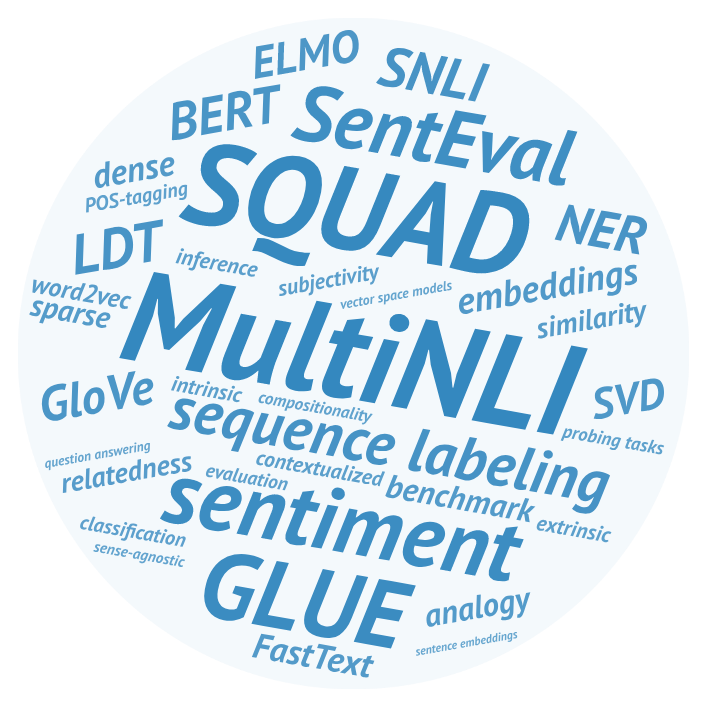

General-purpose dense word embeddings have come a long way since the beginning of their boom in 2013, and they are still the most widely used way of representing words in both industrial and academic NLP systems. However, the problem of finding intrinsic metrics that predict performance on downstream tasks, and that can help develop better representations, is far from solved. At the sentence level and above, we now have a number of probing tasks and large extrinsic evaluation datasets targeting high-level verbal reasoning, but there is still much to learn about what features make a compositional representation successful. Last but not least, there are no established intrinsic evaluation methods for newer kinds of representations such as ELMo, BERT, or box embeddings.

The third edition of RepEval aims to foster discussion of the above issues and to support the search for high-quality general-purpose representation learning techniques for NLP. We hope to encourage interdisciplinary dialogue by welcoming diverse perspectives on these issues: submissions may focus on properties of embedding spaces, performance analysis for various downstream tasks, or approaches based on linguistic and psychological data. In particular, experts from the latter fields are encouraged to contribute analyses of claims previously made in the NLP community.